Google has introduced new accessibility features for Assistant, Maps, and Search. The company is also launching a new app called Magnifier to help users read text in a variety of settings. Google Maps is adding screen reader support to the “search with Live View” feature, so that people with visual impairments can use their phones to identify nearby places such as ATMs and public transport stations.

The improvements to Maps, Search, and Assistant are designed to give people with visual impairments and mobility challenges greater autonomy and ease in their daily activities. Users can also look up wheelchair-accessible walking routes in Google Maps. Google is working on adding disabled-owned businesses to Maps and Search results, and it is now adding wheelchair-accessibility information for parking lots and restrooms to Maps for Android Auto.

Locations with a barrier-free entrance will display a wheelchair icon. Action Blocks is already built into Android devices, giving convenient access to everyday actions, such as calling someone or adjusting the room temperature, through widget-like blocks directly on the home screen. The company is extending this ability to create personalized blocks to Assistant Routines. On the home screen, users can resize a shortcut or add a picture to it.

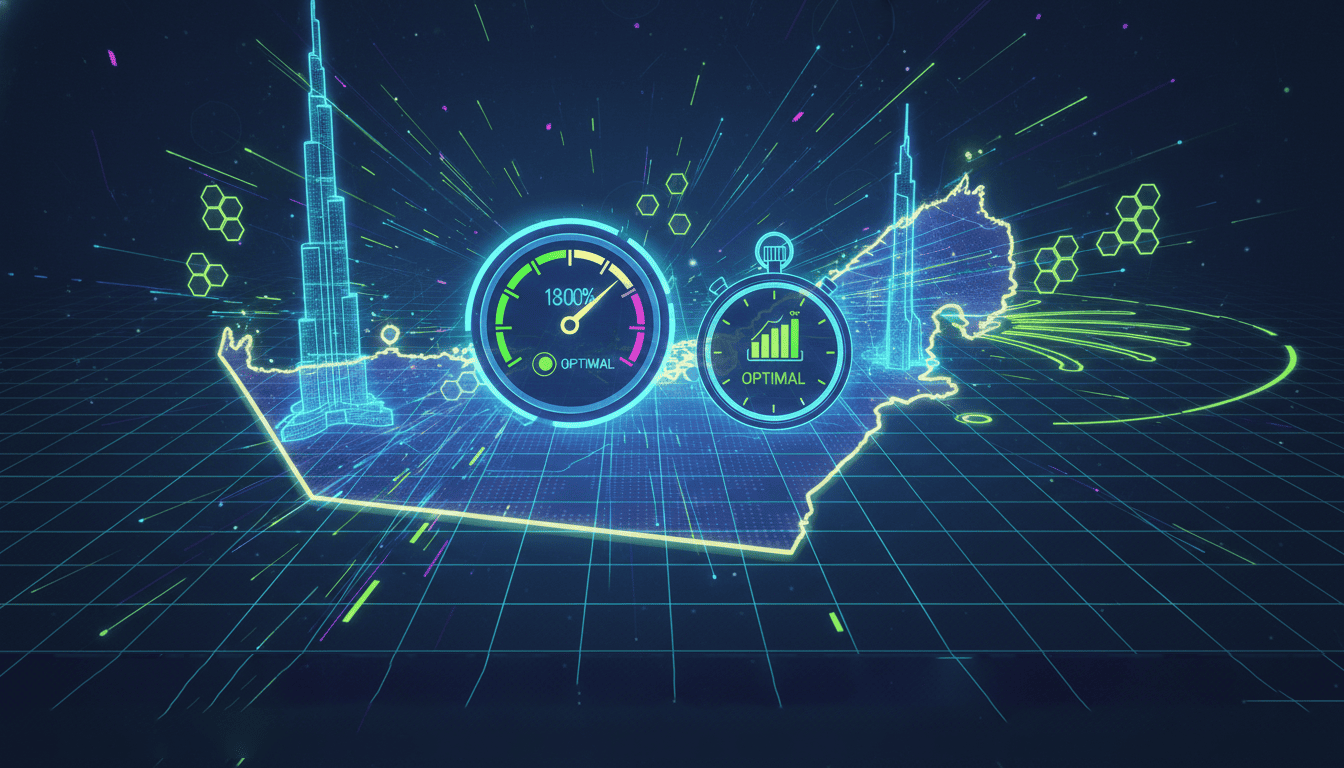

Earlier this year, Google released a feature that lets Chrome on desktop automatically suggest URLs even when users make typos. The company is now bringing this feature to Chrome for iOS and Android. Today, the company is also unveiling several accessibility updates tailored specifically for Pixel devices. It has introduced a new Magnifier app, developed in partnership with the Royal National Institute of Blind People and the National Federation of the Blind. The app is designed to help users read text more easily, particularly on items such as menus and street signs.

Users can adjust the contrast or brightness of an image, or zoom in, to make text easier to read. Google said the zoom function is also useful for watching performers on a distant concert stage. By freezing the screen, the app also makes it easier to read signs that change constantly, such as flight information boards at airports. The app is available on the Pixel 5 and newer models.

Google is improving Guided Frames, a feature that uses haptic feedback, high-contrast animations, and audio guidance to help blind or visually impaired people take photos. The company first unveiled it at the Pixel 7 event last year. The most recent version can identify items such as text, food, and pets. Users can use it with the front or rear camera to capture images of various scenes. Pixel 8 and Pixel 8 Pro users can already access this update, while the Pixel 6 and later models will receive it later this year.

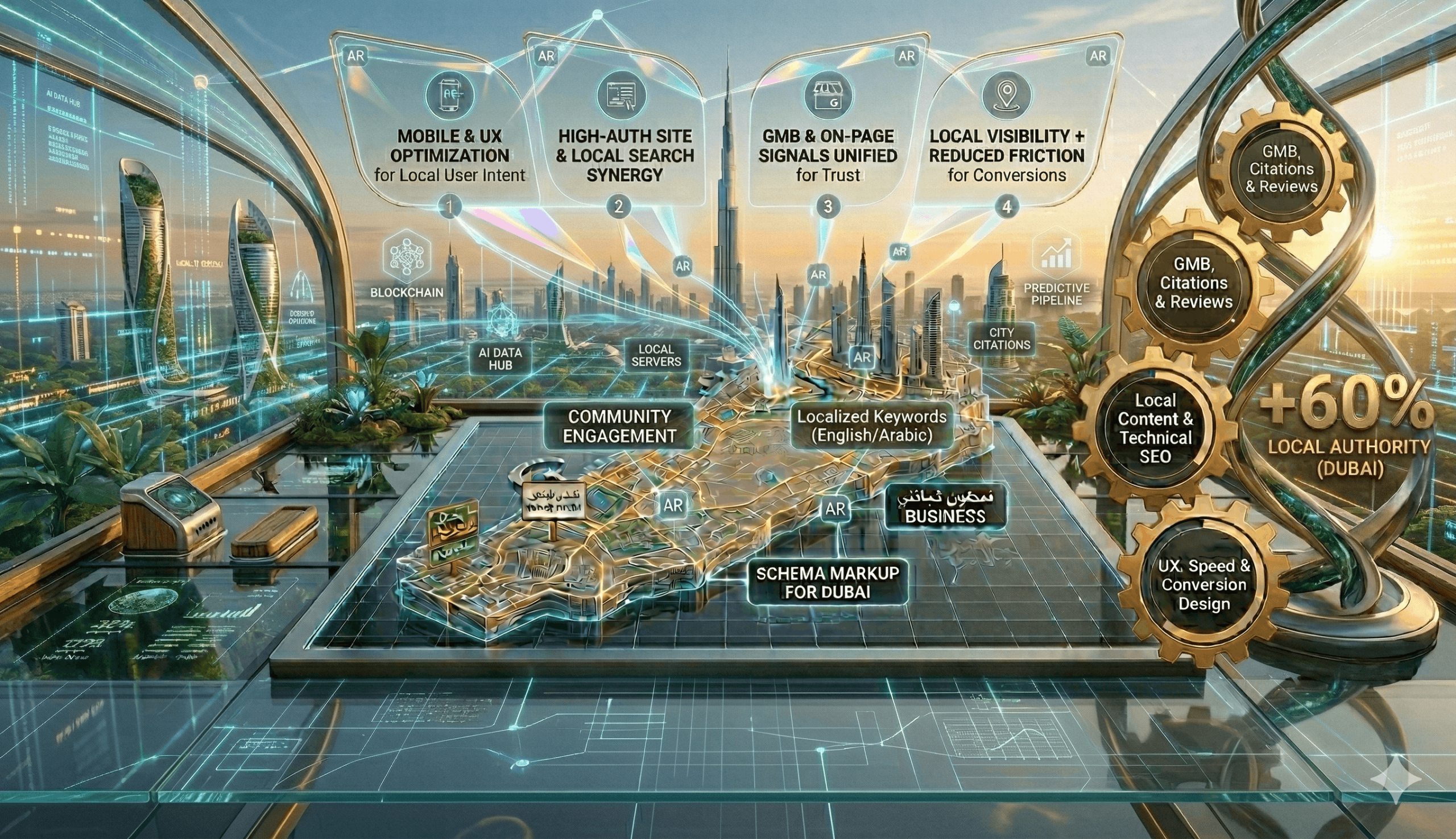

Google has integrated artificial intelligence (AI) and augmented reality (AR) into the Lens in Maps feature, making it easier for users to explore new locations using their phone’s camera. Features inspired by Action Blocks now let users further tailor their Assistant Routines. Users can customize a Routines shortcut with their own photos, change the shortcut’s design, and change how large it appears on the home screen. Google believes this customization will make Assistant Routines useful to a wider variety of users.